AI systems need a trust layer.

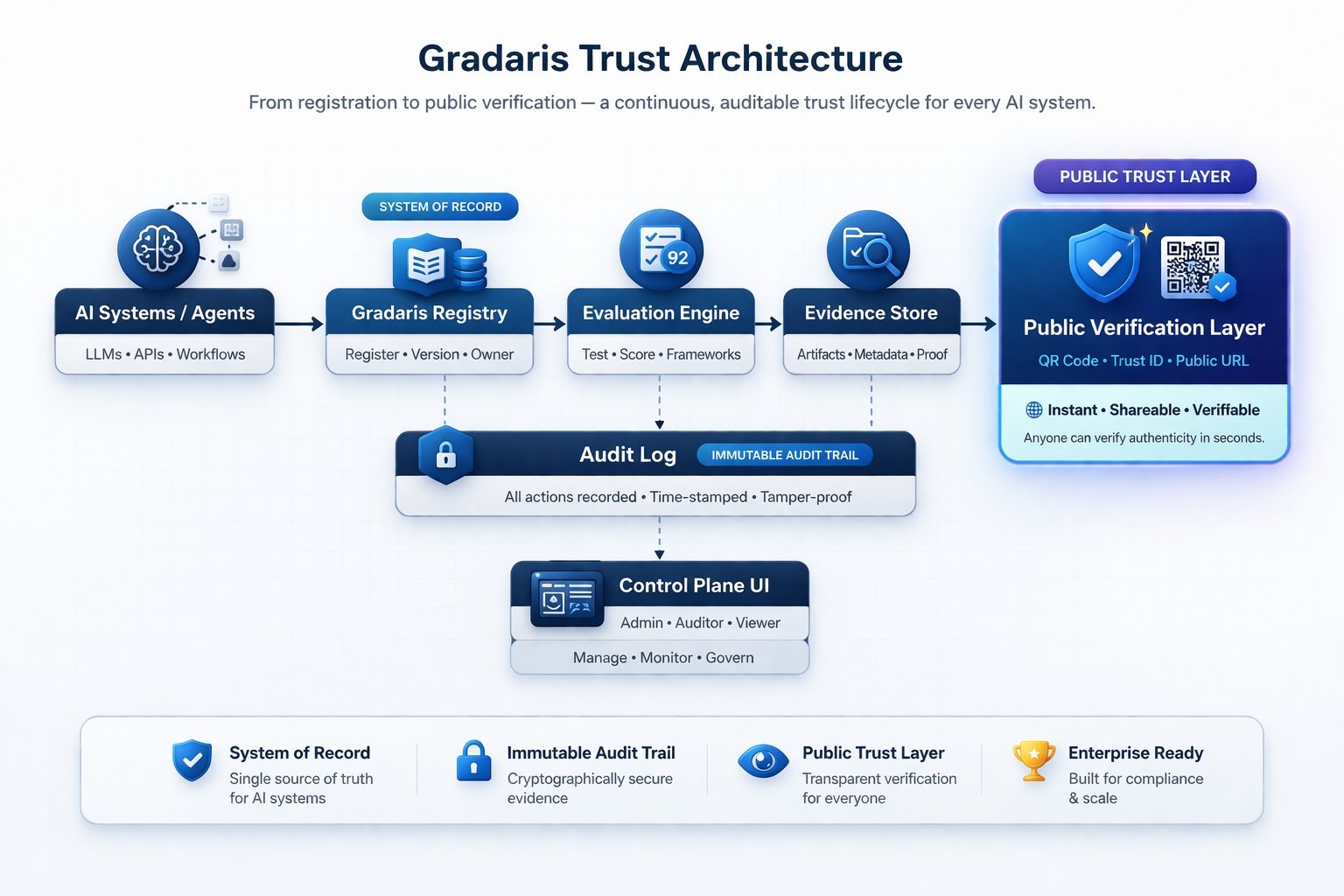

Gradaris is that layer.

Register, assess, and certify every AI system with verifiable Trust IDs, auditable evidence, and public trust validation aligned with the EU AI Act.

No credit card required · 2 minutes · Real governance record

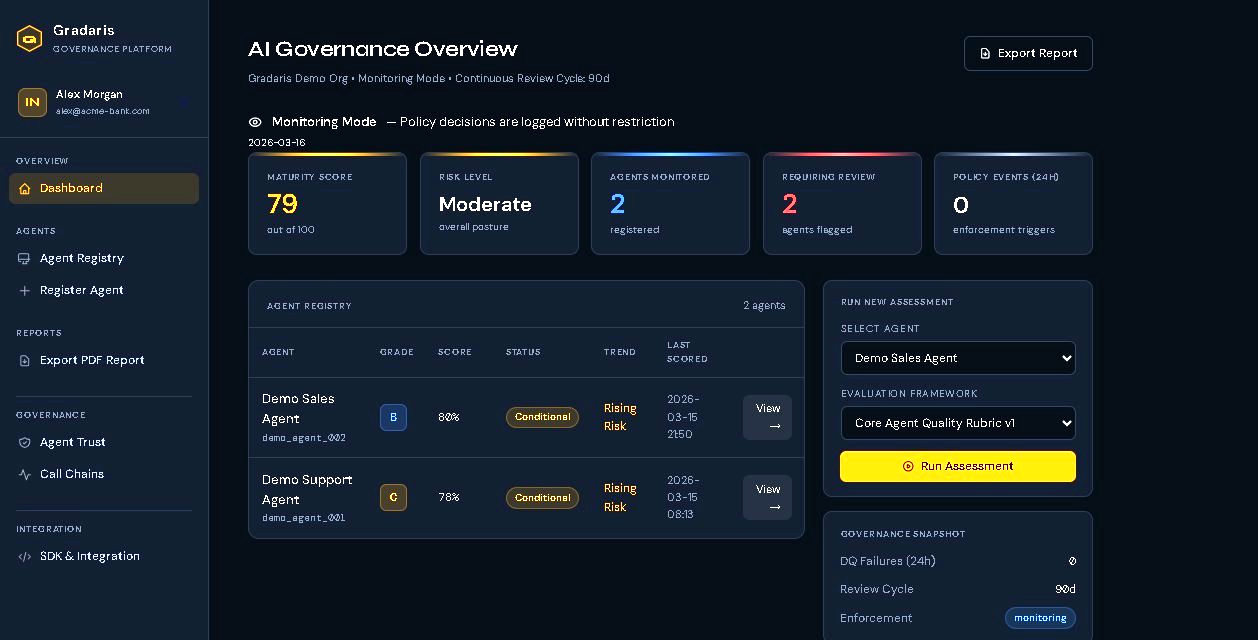

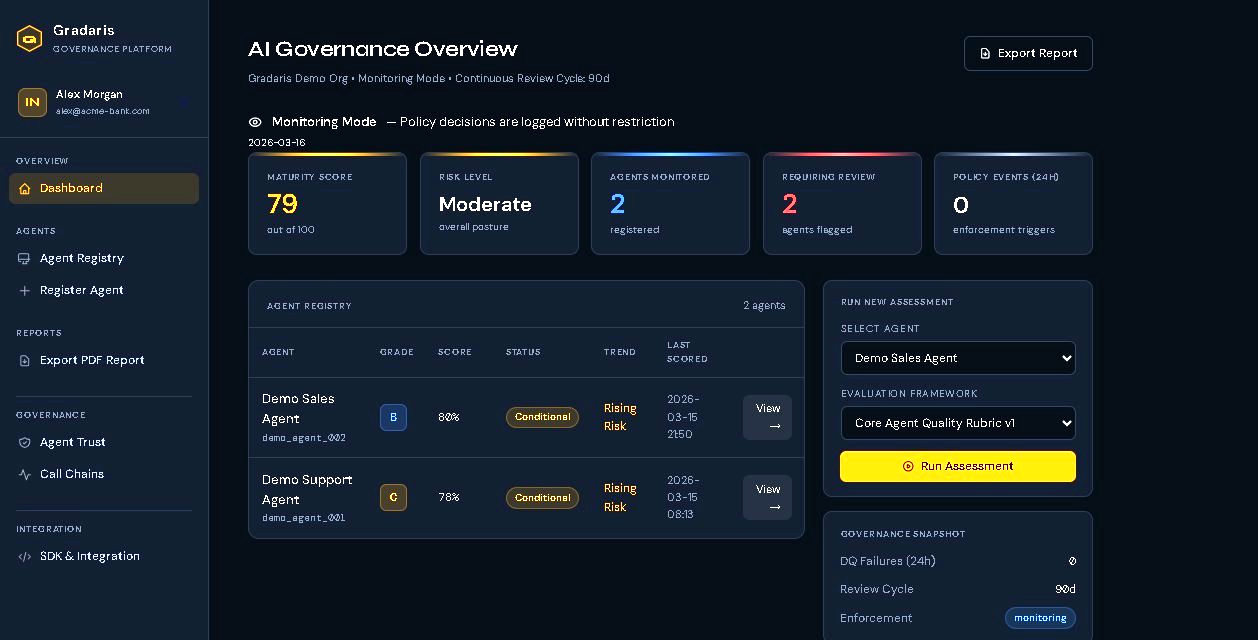

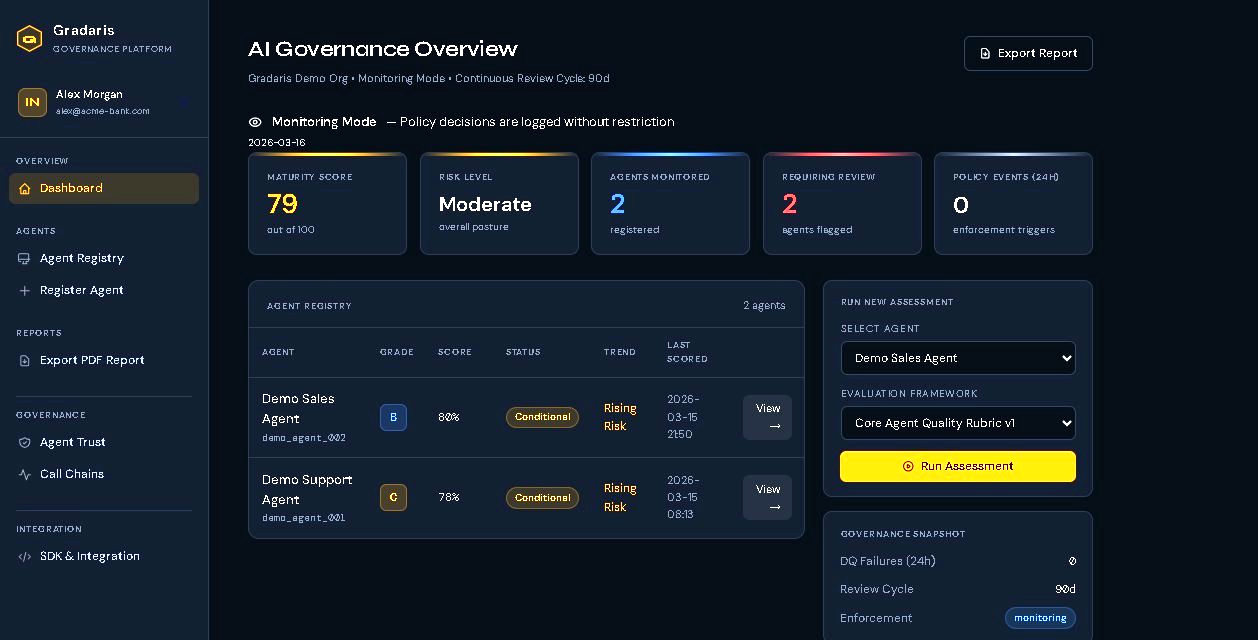

Live control plane showing AI system registry, governance scoring, evidence status, and certification workflow.